Doctors Relying on AI Became 20% Worse at Spotting Health Risks, Study Finds

AI-assistance tools have become a daily resource that has helped boost productivity and speed in several industries. Despite the notable benefits of these smart systems, medical experts are concerned that overreliance on artificial intelligence may be causing more harm than good.

In brief

- A study shows doctors relying on AI in colonoscopies may detect fewer irregularities when working without the tool.

- Research reveals AI boosts efficiency but may weaken judgment skills by discouraging deep and critical thinking.

- The Air France Flight 447 crash highlights the dangers of overdependence on automation in high-stakes environments.

- Experts stress AI’s benefits but warn industries to maintain human expertise for when automation fails.

AI Reliance May Reduce Doctors’ Detection Rates in Colonoscopies

A recent study conducted on 1,443 patients revealed that endoscopists who used AI agents during colonoscopies achieved a lower success rate in detecting irregularities when these tools were not used.

Results of the research, published this month in The Lancet Gastroenterology & Hepatology, show that these doctors detected potential polyps in 28.4% of cases when using the technology. Without these tools, the figure dropped to 22.4%, a fall of 6 percentage points, or roughly 20% in relative terms.
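For readers who want to check the arithmetic, the short sketch below recomputes the drop from the two rates quoted above. The function and variable names are illustrative assumptions rather than anything taken from the study, and the calculation uses only the rounded figures reported here.

```python
def detection_drop(with_ai: float, without_ai: float) -> tuple[float, float]:
    """Return (percentage-point drop, relative drop in percent).

    Both inputs are detection rates expressed in percent. The names are
    hypothetical and follow the article's framing of the two figures.
    """
    absolute = with_ai - without_ai          # difference in percentage points
    relative = absolute / with_ai * 100      # drop relative to the higher rate
    return absolute, relative


# Rates as quoted in the article (rounded to one decimal place).
absolute, relative = detection_drop(28.4, 22.4)
print(f"{absolute:.1f} percentage points, {relative:.1f}% relative")
```

On the quoted figures this gives a relative decline slightly above 20%, which is why the drop is usually reported as about 20%. The distinction matters: a 6-point fall in the detection rate and a 20% relative decline describe the same change.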

Dr. Marcin Romańczyk, a gastroenterologist at H-T. Medical Center in Tychy, Poland, and the study’s author, expressed surprise over the results. He pointed to excessive reliance on artificial intelligence as one of the key factors contributing to the drop in detection rates.

We were taught medicine from books and from our mentors. We were observing them. They were telling us what to do. And now there’s some artificial object suggesting what we should do, where we should look, and actually we don’t know how to behave in that particular case.

Dr. Marcin Romańczyk

Not only does the result capture the potential ill effects of relying heavily on artificial intelligence, it also touches on the evolution of medical practice from an analog-focused tradition to a digital era.

Concerns Over Artificial Intelligence in the Workplace: Productivity Gains at a Cognitive Cost

Beyond its growing use in operating theaters and medical offices, AI automation has become a mainstay in workplaces, with many now turning to these tools to boost productivity. Goldman Sachs even predicted in 2023 that AI could boost workplace productivity by as much as 25%.

But adopting these AI systems also carries drawbacks. In fact, research from leading firms has highlighted the risks associated with putting too much faith in these tools.

A publication by Microsoft and Carnegie Mellon University noted that AI helped increase work efficiency among a group of surveyed knowledge workers. But it also left their judgment and analytical skills at risk of atrophying.

Overreliance on Artificial Intelligence in Aviation: Lessons from Air France Flight 447

Even in the aviation sector, where safety is paramount, previous evidence suggests that overreliance on automation can compromise safety. In 2009, Air France Flight 447, en route from Rio de Janeiro to Paris, crashed into the Atlantic Ocean, killing all 228 people on board.

Investigations later revealed that the plane’s automation system had malfunctioned, causing the aircraft’s automated “flight director” to transmit inaccurate information. And since the flight personnel were not adequately trained in manual flying, they relied on the aircraft’s automated features rather than making the necessary adjustments.

Balancing AI Adoption with Human Expertise in High-Stakes Industries

Lynn Wu, associate professor of operations, information, and decisions at the University of Pennsylvania’s Wharton School, noted that these incidents are a reality check for AI adoption in sectors where human safety is critical. Wu explained that as industries lean into these technologies, they should ensure that workers are adopting the tools appropriately.

What is important is that we learn from this history of aviation and the prior generation of automation, that AI absolutely can boost performance. But at the same time, we have to maintain those critical skills, such that when AI is not working, we know how to take over.

Lynn Wu

She added that if people lose their own skills, artificial intelligence will also perform worse. For AI to improve, individuals must also continually improve themselves.

Romańczyk also accepts the use of AI in medicine, noting that “AI will be, or is, part of our life, whether we like it or not.” Still, he emphasized the need to understand how artificial intelligence affects human thinking and urged professionals to determine the most effective ways to utilize it.


You may also like

Bitcoin ‘Wave 3’ expansion targets $200K as sell-side pressure fades: Analyst

Market sentiment in the crypto space remains fragile; even the positive news of the "U.S. government shutdown" ending failed to trigger a meaningful rebound in bitcoin.

After last month's sharp drop, Bitcoin's rebound has been weak. Despite traditional risk assets rising due to the US government reopening, Bitcoin has failed to break through a key resistance level, and ETF inflows have nearly dried up, highlighting a lack of market momentum.

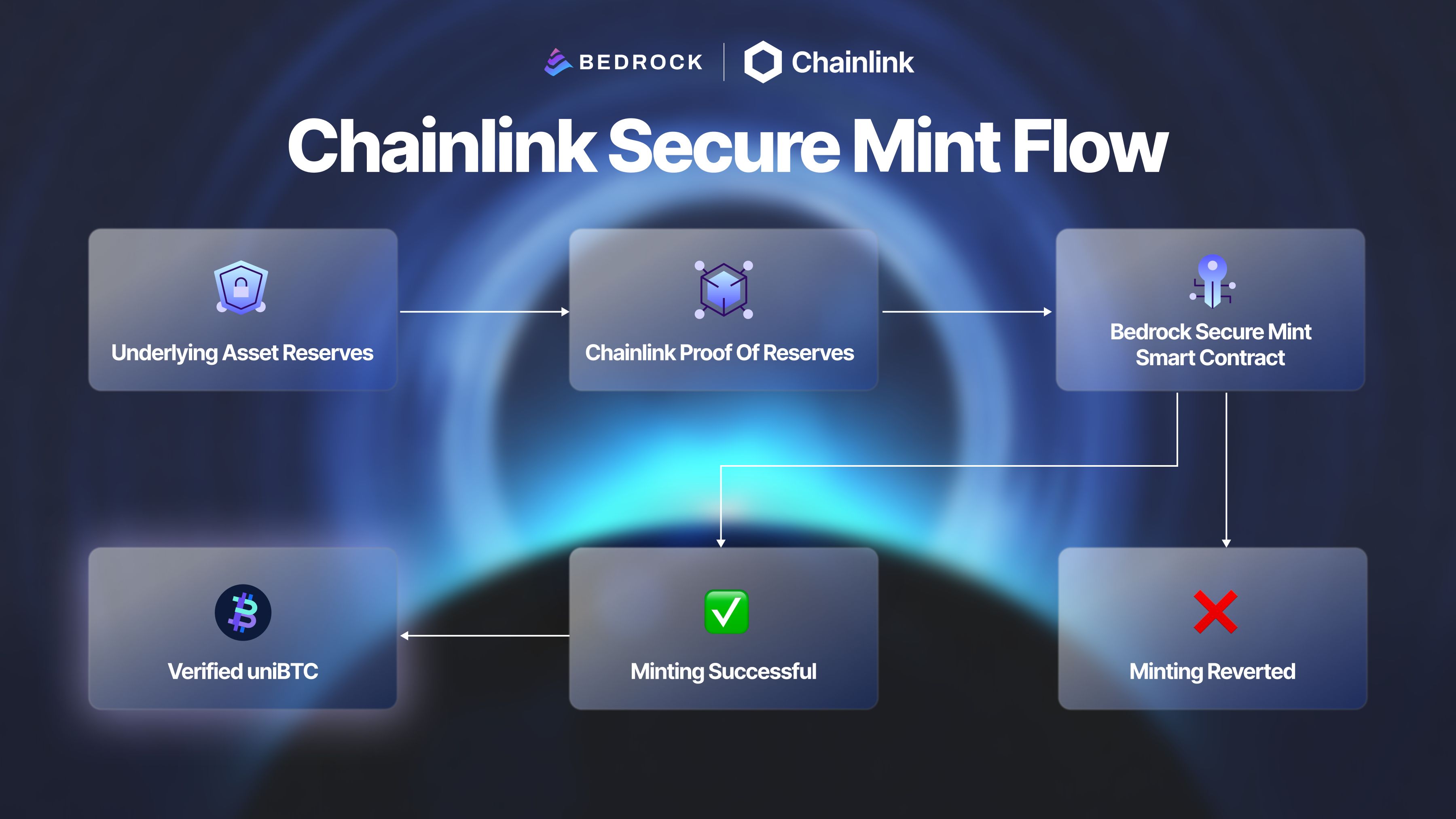

How Bedrock Strengthens BTCFi Security With Chainlink Proof of Reserve and Secure Mint