U.K. Lawmakers Warn: AI Safety Pledges Are Becoming Window Dressing

- 60 UK lawmakers accuse Google DeepMind of breaching AI safety commitments by delaying detailed safety reports for Gemini 2.5 Pro.
- The company released a simplified model card three weeks after launch, lacking transparency on third-party testing and government agency involvement.
- Google claims it fulfilled commitments by publishing a technical report months later, but critics argue this undermines trust in safety protocols.
- Similar issues at Meta and OpenAI highlight industry-wide concerns about opa

A group of 60 U.K. lawmakers has signed an open letter accusing Google DeepMind of failing to uphold its AI safety commitments, particularly by delaying the release of detailed safety information for its Gemini 2.5 Pro model [1]. The letter, published by the political activist group PauseAI, criticizes the company for not providing a comprehensive model card, a key document outlining how a model was tested and built, at the time of the model’s release [1]. This failure, the signatories argue, constitutes a breach of the Frontier AI Safety Commitments made at the international AI summit in Seoul in May 2024, where signatories, including Google, pledged to publicly report on model capabilities, risk assessments, and third-party testing involvement [1].

Google released Gemini 2.5 Pro in March 2025 but did not publish a full model card at that time, despite claiming the model outperformed competitors on key benchmarks [1]. Instead, it released a simplified six-page model card three weeks later, a document that some AI governance experts described as insufficient and concerning [1]. The letter highlights that the document lacked substantive detail about external evaluations and did not confirm whether government agencies, such as the U.K. AI Security Institute, were involved in testing [1]. These omissions raise concerns about the transparency of the company’s safety practices.

In response to the criticisms, a Google DeepMind spokesperson previously told Fortune that any suggestion of the company reneging on its commitments was "inaccurate" [1]. The company also stated in May that a more detailed technical report would be published when the final version of the Gemini 2.5 Pro model family became available. A more comprehensive report was eventually released in late June, months after the full version was made available [1]. The spokesperson reiterated that the company is fulfilling its public commitments, including the Seoul Frontier AI Safety Commitments, and that Gemini 2.5 Pro had undergone rigorous safety checks, including evaluations by third-party testers [1].

The letter also notes that the missing model card for Gemini 2.5 Pro appeared to contradict other pledges made by Google, such as the 2023 White House Commitments and a voluntary Code of Conduct on Artificial Intelligence signed in October 2023 [1]. The situation is not unique to Google. Meta faced similar criticism for the minimal model card it published for its Llama 4 model, while OpenAI opted not to publish a safety report for its GPT-4.1 model, citing its non-frontier status [1]. These developments suggest a broader industry trend in which safety disclosures are becoming less transparent or are omitted altogether.

The letter calls on Google to reaffirm its AI safety commitments by clearly defining deployment as the point when a model becomes publicly accessible, committing to publish safety evaluation reports on a set timeline for all future model releases, and providing full transparency for each release by naming the government agencies and independent third parties involved in testing, along with exact testing timelines [1]. Lord Browne of Ladyton, a signatory of the letter and Member of the House of Lords, warned that if leading AI companies treat safety commitments as optional, it could lead to a dangerous race to deploy increasingly powerful AI systems without proper safeguards [1].

Disclaimer: The content of this article solely reflects the author's opinion and does not represent the platform in any capacity. This article is not intended to serve as a reference for making investment decisions.

You may also like

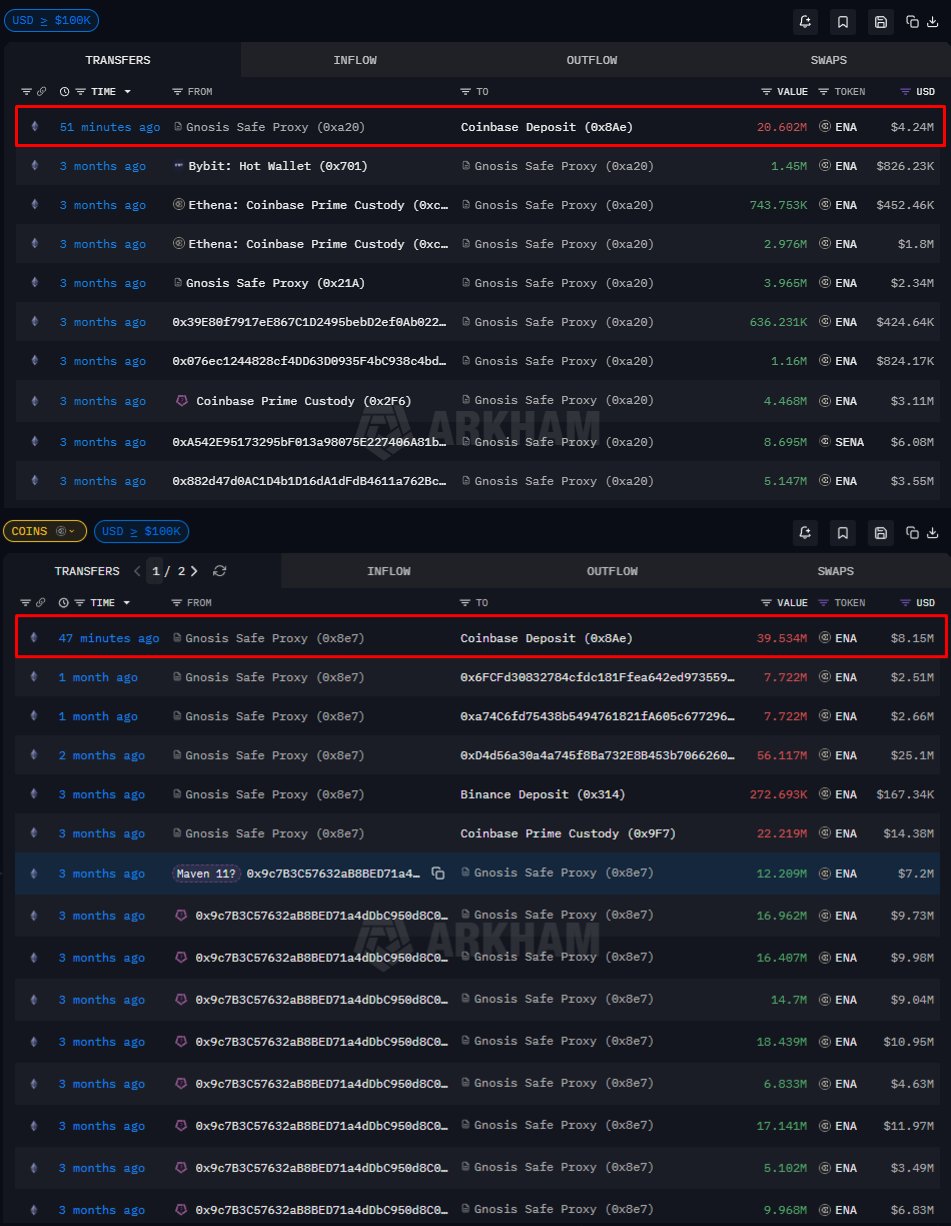

Can Ethena hold $0.20 after 101M ENA flood exchanges?

Galaxy Digital, Which Manages Billions of Dollars, Reveals Its Bitcoin, Ethereum, and Solana Predictions for 2026

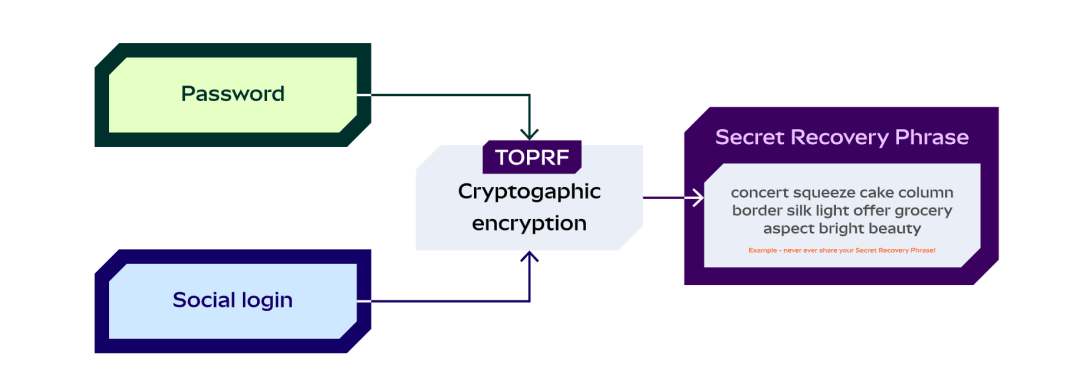

A Brief History of Blockchain Wallets and the 2025 Market Landscape

Tom Lee responds to X's debate with Fundstrat over differing bitcoin outlooks